I could write a lot about what a MySQL cluster means, but the video below is probably much better.

Use Cases

The reason I'm interested in it is the ability to have high availability and redundancy without giving up ACID (Atomicity, Consistency, Isolation, Durability) transactions. E.g. I don't want my database taken offline by a single DDoS attack.

Another use case is exploiting the fact that services like DigitalOcean are most cost-effective for small instances. E.g. if your database is larger than 60GB, it is probably cheaper to run a cluster than to use one large instance, and you get all the benefits of a cluster.

Node Types

Cluster Manager

The cluster manager is responsible for managing the cluster (who knew!?). It detects when one of the nodes goes offline, keeps the cluster working by having the other nodes take over, and synchronizes the data back when the failed node comes back online. If the management nodes all go offline, then a "split-brain" is likely to arise, with inconsistent data between the working databases. Having more than one management node stops the management layer from being a single point of failure for your cluster.

MySQL Proxy

The MySQL proxy passes queries on to the databases, which lets you make those databases publicly inaccessible for security. If it is hooked up to more than one database, it acts as a load balancer by passing the queries on in a round-robin fashion. The proxy automatically identifies when a server becomes unavailable and marks it accordingly, thus providing failover functionality. With multiple proxies your load-balancing might be less "perfect", but you gain high availability (e.g. what happens if you had only one proxy and it goes down?). [ reference material ]
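To make the round-robin and failover idea concrete, here is a minimal shell sketch. It is not how mysql-proxy is implemented; it only illustrates the selection logic, and the backend names and the `DOWN` list are hypothetical placeholders.

```shell
#!/bin/sh
# Illustrative only: pick backends in round-robin order, skipping any marked down.
# BACKENDS and DOWN are hypothetical placeholders, not mysql-proxy settings.
BACKENDS="db1:3306 db2:3306"
DOWN=""          # space-separated list of backends currently marked unavailable

# pick_backend N: print the backend that should serve the N-th query (0-based).
pick_backend() {
    n=$1
    # Build the list of backends that are still up.
    up=""
    for b in $BACKENDS; do
        case " $DOWN " in
            *" $b "*) ;;              # marked down: skip it
            *) up="$up $b" ;;
        esac
    done
    set -- $up                        # positional parameters = available backends
    [ $# -gt 0 ] || return 1          # nothing available
    idx=$(( n % $# + 1 ))
    eval "echo \${$idx}"
}

pick_backend 0
pick_backend 1
pick_backend 2
```

With both backends up, successive queries alternate between them; setting `DOWN="db1:3306"` makes every query go to the remaining backend.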

Database Node

The workhorse that stores the data and actually handles the queries. This is what most people think of when it comes to the database.

MySQL Cluster is implemented through the NDB or NDBCLUSTER storage engine for MySQL (“NDB” stands for Network Database). This means you won't be running the InnoDB storage engine.

Steps

Install Management Nodes

sudo mkdir /tmp/mysql-mgm
cd /tmp/mysql-mgm

sudo wget [url here]

sudo tar xvfz mysql-cluster*.tar.gz

cd mysql-cluster-*/
sudo cp bin/ndb_mgm /usr/bin/.
sudo cp bin/ndb_mgmd /usr/bin/.

sudo chmod 755 /usr/bin/ndb_mg*
cd /tmp
sudo rm -rf /tmp/mysql-mgm

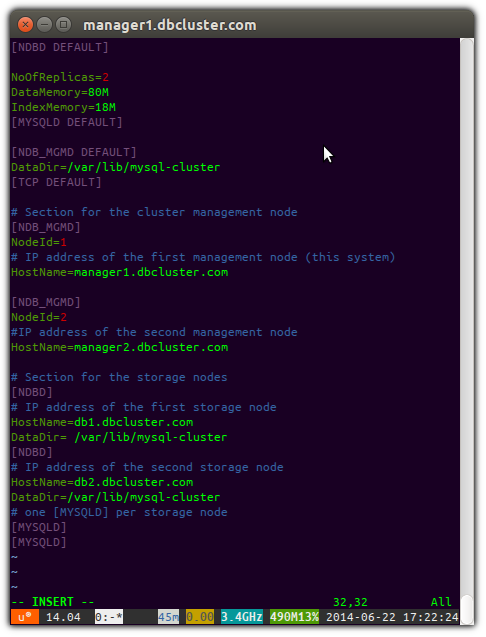

sudo mkdir /var/lib/mysql-cluster
sudo editor /var/lib/mysql-cluster/config.ini

[NDBD DEFAULT]
NoOfReplicas=2
DataMemory=80M
IndexMemory=18M
[MYSQLD DEFAULT]
[NDB_MGMD DEFAULT]
DataDir=/var/lib/mysql-cluster
[TCP DEFAULT]
# Section for the cluster management node
[NDB_MGMD]
NodeId=1
# IP address of the first management node (this system)
HostName=$MANAGER1_IP_OR_HOSTNAME
[NDB_MGMD]
NodeId=2
# IP address of the second management node
HostName=$MANAGER2_IP_OR_HOSTNAME
# Section for the storage nodes
[NDBD]
# IP address of the first storage node
HostName=$DB1_IP_OR_HOSTNAME
DataDir=/var/lib/mysql-cluster
[NDBD]
# IP address of the second storage node
HostName=$DB2_IP_OR_HOSTNAME
DataDir=/var/lib/mysql-cluster
# one [MYSQLD] per storage node
[MYSQLD]
[MYSQLD]
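The placeholders in the config above have to be swapped for real addresses by hand. As a rough sketch of one way to avoid typos, the file can be rendered from shell variables with a heredoc. The addresses below are made-up examples from the documentation range, and the output goes to a scratch file from `mktemp` rather than straight to /var/lib/mysql-cluster/config.ini, so it can be reviewed first.

```shell
#!/bin/sh
# Sketch: render config.ini from shell variables.
# All addresses are made-up examples; replace them with your own nodes.
MANAGER1=192.0.2.11
MANAGER2=192.0.2.12
DB1=192.0.2.21
DB2=192.0.2.22
OUT=$(mktemp)      # scratch file; copy into place once reviewed

cat > "$OUT" <<EOF
[NDBD DEFAULT]
NoOfReplicas=2
DataMemory=80M
IndexMemory=18M
[MYSQLD DEFAULT]
[NDB_MGMD DEFAULT]
DataDir=/var/lib/mysql-cluster
[TCP DEFAULT]
[NDB_MGMD]
NodeId=1
HostName=$MANAGER1
[NDB_MGMD]
NodeId=2
HostName=$MANAGER2
[NDBD]
HostName=$DB1
DataDir=/var/lib/mysql-cluster
[NDBD]
HostName=$DB2
DataDir=/var/lib/mysql-cluster
[MYSQLD]
[MYSQLD]
EOF

echo "wrote $OUT"
```

Once the result looks right, something like `sudo cp "$OUT" /var/lib/mysql-cluster/config.ini` puts it in place.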

[ How My Config Looks ]

sudo ndb_mgmd -f /var/lib/mysql-cluster/config.ini --configdir=/var/lib/mysql-cluster/

sudo su
crontab -l > /tmp/mycron
echo "@reboot ndb_mgmd -f /var/lib/mysql-cluster/config.ini --configdir=/var/lib/mysql-cluster/" >> /tmp/mycron
crontab /tmp/mycron
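One thing to watch: re-running the crontab commands above appends a duplicate @reboot line each time. A small sketch of a helper that only appends the line when it is missing is shown here. The function name `add_cron_line` is my own, and the example works on a scratch file so it can be tried without touching the live crontab.

```shell
#!/bin/sh
# add_cron_line FILE LINE: append LINE to FILE unless an identical line already exists.
add_cron_line() {
    file=$1
    line=$2
    touch "$file"
    # -x: match the whole line, -F: treat LINE as a fixed string, -q: quiet
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Example against a scratch file (from mktemp, not the live crontab):
CRON_TMP=$(mktemp)
ENTRY='@reboot ndb_mgmd -f /var/lib/mysql-cluster/config.ini --configdir=/var/lib/mysql-cluster/'
add_cron_line "$CRON_TMP" "$ENTRY"
add_cron_line "$CRON_TMP" "$ENTRY"   # second call is a no-op
cat "$CRON_TMP"
```

To use it for real, dump the current crontab into the file with `crontab -l`, call the helper, then load the file back with `crontab`.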

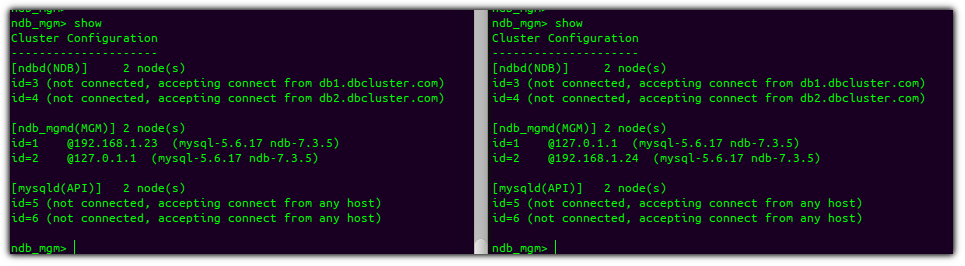

ndb_mgm -e show

[ You should see something like the following on your nodes. ]

Install The Storage/Database Nodes

sudo useradd mysql

cd /usr/local
sudo wget [download link here]
sudo tar xvfz mysql-cluster-*
sudo ln -s mysql-cluster-gpl-[version number]-linux-glibc2.5-x86_64 mysql
cd mysql
sudo apt-get install libaio1 libaio-dev
sudo scripts/mysql_install_db --user=mysql --datadir=/usr/local/mysql/data
# Change the owner to the newly created mysql group
sudo chown -R root:mysql .
sudo chown -R mysql data

sudo cp support-files/mysql.server /etc/init.d/.
sudo chmod 755 /etc/init.d/mysql.server

cd /usr/local/mysql/bin
sudo mv * /usr/bin
cd ..
sudo rm -rf /usr/local/mysql/bin
sudo ln -s /usr/bin /usr/local/mysql/bin

echo '[mysqld]
bind-address = 0.0.0.0
ndbcluster
# IP address of the cluster management node
ndb-connectstring=$db-manager1,$db-manager2
[mysql_cluster]
# IP address of the cluster management node
ndb-connectstring=$db-manager1,$db-manager2' | sudo tee /etc/my.cnf

sudo mkdir /var/lib/mysql-cluster

cd /var/lib/mysql-cluster
sudo ndbd --initial
sudo /etc/init.d/mysql.server start

sudo /usr/local/mysql/bin/mysql_secure_installation

sudo su
crontab -l > /tmp/mycron
echo "@reboot ndbd" >> /tmp/mycron
crontab /tmp/mycron

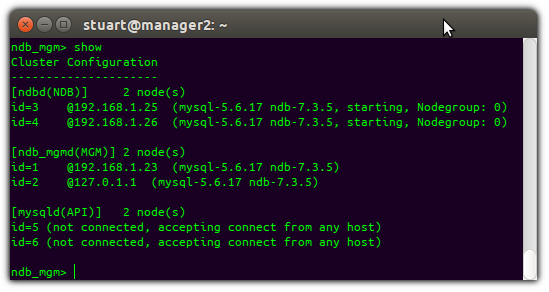

ndb_mgm -e show

[ You should see something like above ]
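If you would rather not eyeball the output every time, a small sketch like the following counts how many nodes the management client reports as disconnected. It assumes disconnected nodes appear on lines containing the text "not connected". The sample text is a hand-written approximation for illustration, not captured output.

```shell
#!/bin/sh
# count_disconnected: read `ndb_mgm -e show` style text on stdin and print
# how many lines contain "not connected".
count_disconnected() {
    # grep -c exits non-zero when the count is 0, so mask that exit status.
    grep -c 'not connected' || true
}

# Hand-written sample for illustration only (not real captured output):
SAMPLE='[ndbd(NDB)]     2 node(s)
id=3    @192.0.2.21  (Nodegroup: 0, *)
id=4 (not connected, accepting connect from 192.0.2.22)'

printf '%s\n' "$SAMPLE" | count_disconnected
```

Against a live cluster it would be used as `ndb_mgm -e show | count_disconnected`.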

Install Proxies

sudo apt-get install mysql-proxy
sudo mkdir /etc/mysql-proxy

echo '[mysql-proxy]
daemon = true
proxy-address = $THIS_PROXIES_IP:3306
proxy-skip-profiling = true
keepalive = true
event-threads = 50
pid-file = /var/run/mysql-proxy.pid
log-file = /var/log/mysql-proxy.log
log-level = debug
proxy-backend-addresses = $storage1_hostname_or_IP:3306,$storage2_hostname_or_IP:3306
proxy-lua-script=/usr/lib/mysql-proxy/lua/proxy/balance.lua' | sudo tee /etc/mysql-proxy/mysql-proxy.conf

echo 'ENABLED="true"
OPTIONS="--defaults-file=/etc/mysql-proxy/mysql-proxy.conf --plugins=proxy"' | sudo tee /etc/default/mysql-proxy

sudo /etc/init.d/mysql-proxy start   # also accepts stop and status
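If the proxy refuses to start, one thing worth checking is that the path given to --defaults-file actually exists. Below is a minimal sketch of such a check. The function names are my own, and it only inspects an OPTIONS string like the one written to /etc/default/mysql-proxy.

```shell
#!/bin/sh
# defaults_file_from OPTIONS: print the path passed to --defaults-file, or nothing.
defaults_file_from() {
    printf '%s\n' "$1" | sed -n 's/.*--defaults-file=\([^ ]*\).*/\1/p'
}

# check_defaults_file OPTIONS: report whether that path exists on this machine.
check_defaults_file() {
    path=$(defaults_file_from "$1")
    if [ -z "$path" ]; then
        echo "no --defaults-file in OPTIONS"
        return 1
    elif [ -f "$path" ]; then
        echo "ok: $path"
    else
        echo "missing: $path"
        return 1
    fi
}
```

On a proxy node this could be run as `. /etc/default/mysql-proxy && check_defaults_file "$OPTIONS"`.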
